Controlling the AI’s Spotlight | The Neural Search Shift

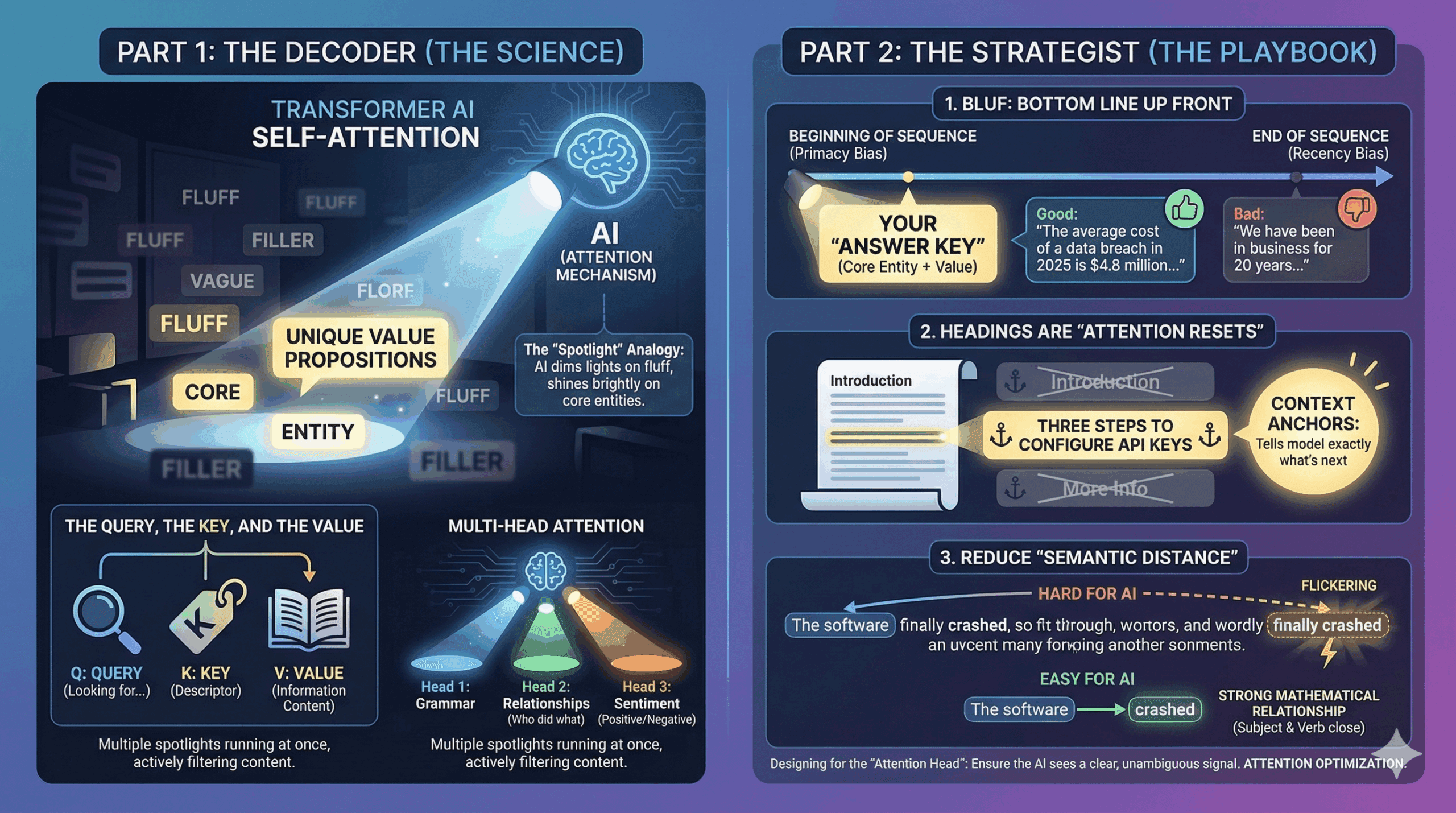

A Transformer reads every word in your article, but it does not treat every word equally. It uses a mechanism called Self-Attention to assign a “weight” to specific parts of your text. Think of it like a spotlight: the AI dims the lights on fluff and shines a bright light on the core entities. In this episode, we learn how to direct that spotlight onto your unique value propositions.

Part 1: The Decoder (The Science)

The Query, The Key, and The Value

Inside a Transformer model, the “Attention Mechanism” works a lot like a database retrieval system, but softer: instead of returning one exact match, it returns a weighted blend of everything it finds. For every token, the model computes three vectors:

- The Query (Q): What the model is looking for.

- The Key (K): The label or descriptor of your content.

- The Value (V): The actual information content.
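To make the “soft database lookup” idea concrete, here is a minimal sketch of scaled dot-product attention for a single query, written in plain Python. The vectors are hypothetical and hand-picked for illustration; a real model learns them and uses hundreds or thousands of dimensions.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    # Score each key by how well it matches the query.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is a weighted blend of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Hypothetical 2-d vectors, chosen only to show the mechanics.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attend(query, keys, values)
```

The key that best matches the query receives the largest weight, so its value dominates the output. Nothing is switched off completely: every value still contributes a little, which is what “softer” means here.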

The “Spotlight” Analogy

Imagine a dark room filled with filing cabinets. The AI has a flashlight (Attention).

- If the user asks about “Apple Stock Price,” the AI shines its light.

- It sees the word “Apple.”

- The Conflict: Is this the fruit or the tech giant?

- The Attention Resolution: The model scans the surrounding words (the context). If it sees “orchard” or “pie,” it dims the light. If it sees “Nasdaq” or “iPhone,” it turns the brightness up to 100%.

Multi-Head Attention

Modern LLMs use “Multi-Head Attention.” This means they have multiple spotlights running at once.

- Head 1 might focus on grammar.

- Head 2 might focus on the relationship between entities (Who did what).

- Head 3 might focus on the sentiment (Is this positive or negative?).
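As a rough illustration of several spotlights running at once, the sketch below gives each head its own slice of the vectors and lets it compute its own attention weights. This is a simplification: real models use learned projection matrices per head rather than plain slicing, and the vectors here are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def split_heads(vector, num_heads):
    """Slice one vector into equal chunks, one per head."""
    size = len(vector) // num_heads
    return [vector[h * size:(h + 1) * size] for h in range(num_heads)]

def multi_head_weights(query, keys, num_heads):
    """Return one attention distribution over the keys for each head."""
    q_heads = split_heads(query, num_heads)
    k_heads = [split_heads(k, num_heads) for k in keys]
    all_weights = []
    for h, q in enumerate(q_heads):
        scale = math.sqrt(len(q))
        scores = [sum(a * b for a, b in zip(q, k[h])) / scale for k in k_heads]
        all_weights.append(softmax(scores))
    return all_weights

# Hypothetical 4-d vectors: dims 0-1 feed head 0, dims 2-3 feed head 1.
query = [1.0, 0.0, 0.0, 1.0]
keys = [
    [2.0, 0.0, 0.0, 0.0],  # token A matches the query only in head 0's slice
    [0.0, 0.0, 0.0, 2.0],  # token B matches the query only in head 1's slice
]
head_weights = multi_head_weights(query, keys, num_heads=2)
```

In this toy setup, head 0 puts most of its weight on token A while head 1 favors token B. The same text gets read through different lenses in parallel.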

The Takeaway: The model is actively filtering your content while it reads it. If your context is weak, the spotlight flickers, and the model loses confidence in your answer.

Part 2: The Strategist (The Playbook)

Designing for the “Attention Head”

Your goal is to ensure that when the AI’s spotlight hits your content, it sees a clear, unambiguous signal. We call this Attention Optimization.

1. BLUF: Bottom Line Up Front

Journalism has a rule called “Don’t Bury the Lead.” This is now an SEO rule.

- The Mechanism: LLMs tend to pay more attention to the beginning of a sequence (Primacy Bias) and the end of a sequence (Recency Bias), and less to material buried in the middle.

- The Strategy: Place your “Answer Key” in the first paragraph.

- Bad: “We have been in business for 20 years and have seen many changes in the market. One thing that remains constant is…” (The spotlight is dimming).

- Good: “The average cost of a data breach in 2025 is $4.8 million. To mitigate this…” (Spotlight is ON. You provided a clear entity and value immediately).

2. Headings are “Attention Resets”

In a long document, the AI’s attention can drift (or become diluted).

- The Strategy: Treat your H2s and H3s not just as style, but as Context Anchors.

- A vague header like “Introduction” or “More Info” tells the Attention Head nothing.

- A specific header like “Three Steps to Configure API Keys” acts as a strong “Key” for the model’s “Query.” It tells the model exactly what the next chunk of text is about, increasing the probability of retrieval.
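One way to audit headings at scale is a small script that flags the vague ones. The sketch below assumes your content is available as markdown with `##` and `###` headings. The word list and the “fewer than three words” rule are illustrative assumptions, not an established standard.

```python
import re

# Hypothetical list of generic headings; extend it for your own site.
VAGUE_HEADINGS = {"introduction", "overview", "more info", "conclusion", "misc", "other"}

def find_vague_headings(markdown_text):
    """Return markdown H2/H3 headings that are generic or very short."""
    flagged = []
    for line in markdown_text.splitlines():
        match = re.match(r"^(#{2,3})\s+(.*\S)\s*$", line)
        if not match:
            continue  # not an H2 or H3 line
        title = match.group(2)
        normalized = title.lower().strip(" :.")
        # Heuristic: flag known generic titles and anything under three words.
        if normalized in VAGUE_HEADINGS or len(normalized.split()) < 3:
            flagged.append(title)
    return flagged

sample = """## Introduction
Some text.
## Three Steps to Configure API Keys
More text.
### More Info
"""
flagged = find_vague_headings(sample)
```

For the sample above, “Introduction” and “More Info” are flagged, while “Three Steps to Configure API Keys” passes. Treat the output as a to-do list for rewriting, not as a score.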

3. Reduce “Semantic Distance”

Complex, winding sentences are harder for models to parse confidently.

- Hard for AI: “The software, which was developed by a team in Austin during the late 90s when the tech boom was exploding, finally crashed.” (“Crashed” arrives 20 words after “software,” so the link between them is harder to resolve.)

- Easy for AI: “The software crashed. It was developed in Austin during the late 90s.”

- The Strategy: Keep your Subject and your Verb close together. With fewer intervening words competing for attention, the model can link the two more reliably.
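The gap in the two example sentences can be measured with a few lines of Python. This sketch simply counts word positions between a subject and a verb that you name by hand. It does no grammatical parsing, so identifying the subject and verb is left to you.

```python
import re

def word_gap(sentence, subject, verb):
    """Count how many word positions separate `subject` from `verb`."""
    words = re.findall(r"[A-Za-z0-9']+", sentence.lower())
    # Uses the first occurrence of each word in the sentence.
    return words.index(verb.lower()) - words.index(subject.lower())

long_version = ("The software, which was developed by a team in Austin during "
                "the late 90s when the tech boom was exploding, finally crashed.")
short_version = "The software crashed. It was developed in Austin during the late 90s."

long_gap = word_gap(long_version, "software", "crashed")    # 20
short_gap = word_gap(short_version, "software", "crashed")  # 1
```

A gap of 1 means the verb directly follows the subject. If you run this over your own key sentences, large gaps are good candidates for splitting into two sentences.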

ContentXir Intelligence

The “Ambiguity Penalty”

At ContentXir, we analyze content for Structural Clarity. We find that pages with “wandering” narratives, where the topic shifts frequently without clear transition signals, score lower in Generative Search visibility.

Why? Because the AI is risk-averse. If its Attention Heads cannot definitively link “Your Brand” to “The Solution” because of too much intervening fluff, it will choose a competitor with clearer sentence structures. It wants the “Safe Bet.”

Action Item for you: The “First 50 Words” Rewrite.

- Open your top 3 landing pages.

- Read ONLY the first 50 words.

- The Test: If you deleted the rest of the page, would the user still know exactly what you do and who it is for?

- If not, rewrite it. Feed the Attention Head immediately.
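If you want to run this test across many pages, a short script can pull the first 50 words and check them for the terms that must appear. The page copy and required terms below are hypothetical; substitute your own text and your own definition of “what you do and who it is for.”

```python
def first_words(text, limit=50):
    """Return the first `limit` whitespace-separated words of `text`."""
    return " ".join(text.split()[:limit])

def mentions_all(snippet, required_terms):
    """Check that every required term appears in the snippet (case-insensitive)."""
    lowered = snippet.lower()
    return all(term.lower() in lowered for term in required_terms)

# Hypothetical landing page copy, used only to exercise the functions.
page = ("Acme Backup is automated cloud backup for dental practices. "
        + "It runs nightly and restores files in minutes. " * 20)
opening = first_words(page)
passes = mentions_all(opening, ["cloud backup", "dental practices"])
```

A simple substring check like this only confirms that the words are present. You still need a human read to judge whether the opening is actually clear.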
