The New Crawl Budget | The Neural Search Shift

We have moved out of the “Engine Room” (how the AI thinks) and are now entering the “Library” (how the AI finds you). This season is critical because your content cannot appear in a generated answer if it was never ingested.

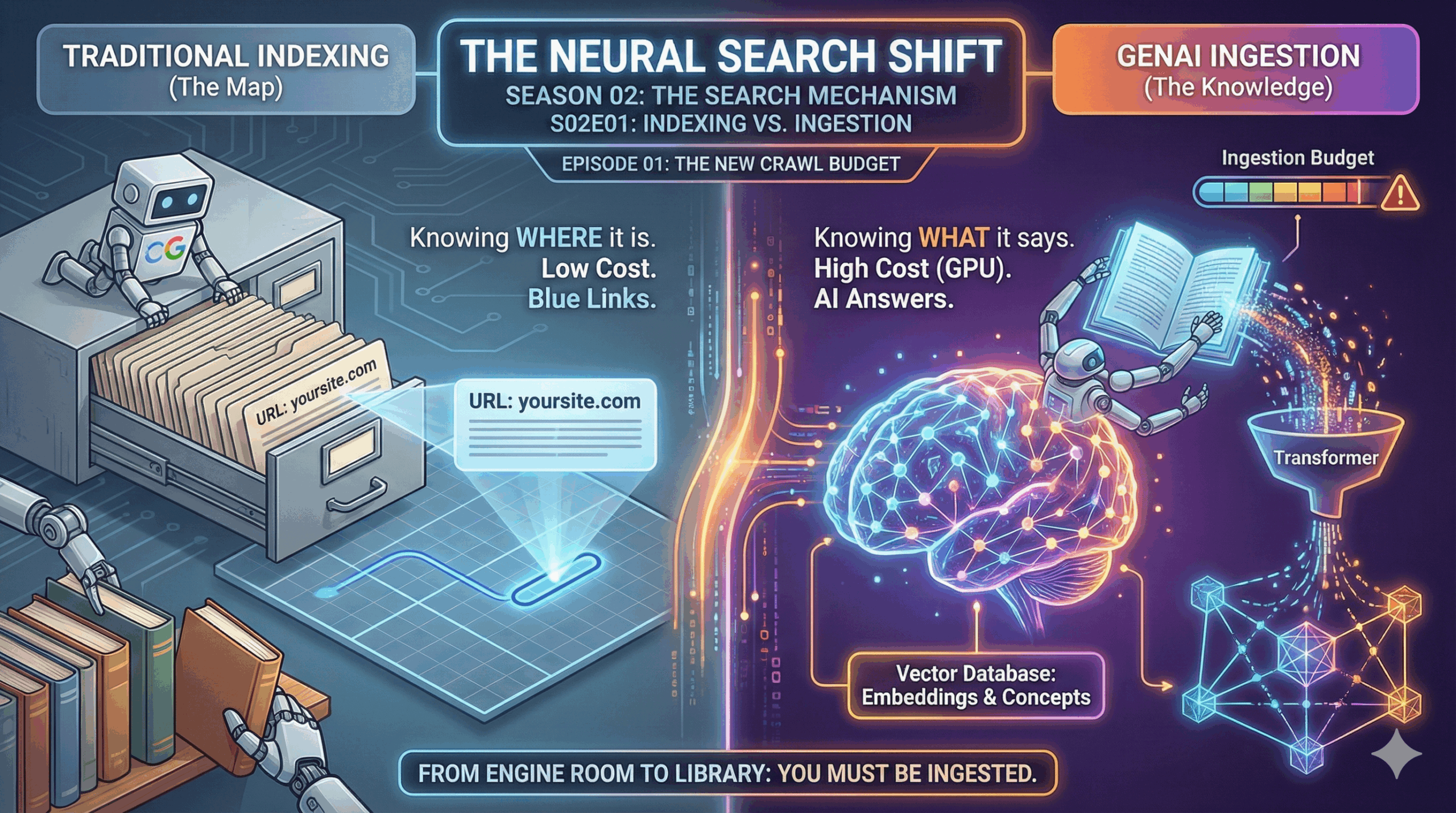

For 25 years, SEOs obsessed over “Indexing”—getting Google to list your URL in its database. But in the GenAI era, being indexed is not enough. You must be Ingested.

There is a massive difference between Google knowing where your page is (Indexing) and Google knowing what your page says (Ingestion). This episode reveals the hidden “Ingestion Budget” that determines whether your content gets vectorized for AI answers or is just filed away as a blue link.

Part 1: The Decoder (The Science)

**The Phone Book vs. The Brain**

To understand why your content might be missing from AI Overviews or ChatGPT search, you have to distinguish between two distinct processes.

1. Traditional Indexing (The Map)

This is the “Old Google.”

• Process: Googlebot crawls your page, scans the text for keywords, and adds your URL to a massive “Inverted Index.”

• The Analogy: It’s like a librarian adding your book title and location to the card catalog. The librarian hasn’t read the book; they just know which shelf it’s on.

• Cost: Low. Storing a link and a few keywords is cheap.
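The card-catalog analogy can be made concrete with a minimal inverted index. This is a toy sketch and not Google's implementation. Each term maps to the set of URLs that contain it, and nothing about meaning is stored, which is why this step is cheap. The example URLs and function names are hypothetical.

```python
import re
from collections import defaultdict


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_inverted_index(pages: dict[str, str]) -> dict[str, set[str]]:
    """Map every term to the set of URLs whose text contains it."""
    index: dict[str, set[str]] = defaultdict(set)
    for url, text in pages.items():
        for term in tokenize(text):
            index[term].add(url)
    return dict(index)


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return the URLs that contain every query term (boolean AND)."""
    terms = tokenize(query)
    if not terms:
        return set()
    results = set(index.get(terms[0], set()))
    for term in terms[1:]:
        results &= index.get(term, set())
    return results


# Hypothetical pages: the index keeps only term -> URL pointers, never meaning.
pages = {
    "https://example.com/crawl-budget": "Crawl budget explained for large sites",
    "https://example.com/vector-search": "How vector search finds similar passages",
}
index = build_inverted_index(pages)
print(search(index, "crawl budget"))  # {'https://example.com/crawl-budget'}
```

The librarian "knows the shelf" because a lookup is just a set intersection. No model ever reads the text.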

2. GenAI Ingestion (The Knowledge)

This is the “New Search.”

• Process: The bot crawls the page, scrapes the full text, cleans the HTML code, splits the text into chunks and tokens, runs each chunk through a Transformer model to convert it into Vector Embeddings (lists of numbers that encode meaning), and stores them in a Vector Database.

• The Analogy: The librarian actually reads your book, highlights the key facts, summarizes the chapters, and memorizes the concepts.

• Cost: Extremely High. Every chunk must pass through a Transformer model running on GPUs, and the resulting vectors must then be stored and searched.
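The ingestion steps above can be sketched end to end as clean, chunk, embed, store, and retrieve. This is a minimal illustration only.

A real pipeline would call a Transformer embedding model at the embed step. To keep the example runnable with only the standard library, that step is swapped for a hashed bag-of-words vector (feature hashing), which captures shared words but not meaning. All names (`clean_html`, `chunk`, `embed`, `VectorStore`) and the vector size are hypothetical.

```python
import hashlib
import math
import re
from html.parser import HTMLParser

DIM = 1024  # size of the toy embedding vectors (assumption)


class TextExtractor(HTMLParser):
    """Collect visible text, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.parts.append(data.strip())


def clean_html(html: str) -> str:
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


def chunk(text: str, size: int = 50) -> list[str]:
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> list[float]:
    """Stand-in for a Transformer: hash tokens into an L2-normalized vector.
    sha256 is used instead of hash() so buckets are stable across runs."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.sha256(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class VectorStore:
    """Tiny in-memory vector database ranked by cosine similarity."""

    def __init__(self) -> None:
        self.rows: list[tuple[list[float], str]] = []

    def add(self, text: str) -> None:
        self.rows.append((embed(text), text))

    def query(self, question: str, k: int = 1) -> list[str]:
        q = embed(question)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = sorted(self.rows, key=lambda row: -sum(a * b for a, b in zip(q, row[0])))
        return [text for _, text in scored[:k]]


html = """<article><h1>Ingestion</h1><p>Vector embeddings let an AI retrieve passages by meaning.</p>
<script>trackVisitor();</script><p>Inverted indexes map keywords to URLs.</p></article>"""

store = VectorStore()
for passage in chunk(clean_html(html), size=10):
    store.add(passage)
print(store.query("how do embeddings retrieve passages"))
```

Every stage here runs once per chunk. That is where the cost gap with indexing comes from once the toy `embed` is replaced by a real model.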

The Harsh Reality:

Because Ingestion is expensive, search engines are picky. They might Index 100% of your site (make it searchable) but only Ingest 10% of it (make it available for AI answers). If your content is expensive to read, they may simply decline to pay the bill to ingest it.

Part 2: The Strategist (The Playbook)

Optimizing for Ingestion Efficiency

Your goal is to lower the “tax” the search engine pays to read your site. If you are “cheap” to ingest, you get ingested more often.
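The "cheap pages get ingested more often" logic can be illustrated with a toy scheduler. The ingestion budget is this episode's model, not a documented search-engine algorithm. The sketch assumes a fixed per-cycle processing budget in kilobytes and picks pages by value per kilobyte. The page values, sizes, and the greedy rule are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    value: float  # hypothetical usefulness score for AI answers
    html_kb: int  # kilobytes the bot must fetch, parse, and clean


def plan_ingestion(pages: list[Page], budget_kb: int) -> list[str]:
    """Greedy toy scheduler: take pages with the best value per KB
    until the per-cycle processing budget is spent."""
    chosen: list[str] = []
    remaining = budget_kb
    for page in sorted(pages, key=lambda p: p.value / p.html_kb, reverse=True):
        if page.html_kb <= remaining:
            chosen.append(page.url)
            remaining -= page.html_kb
    return chosen


# Two hypothetical guides with equal value; one is bloated with scripts and markup.
pages = [
    Page("https://example.com/lean-guide", value=10, html_kb=40),
    Page("https://example.com/bloated-guide", value=10, html_kb=900),
    Page("https://example.com/faq", value=6, html_kb=30),
]
print(plan_ingestion(pages, budget_kb=500))
# ['https://example.com/lean-guide', 'https://example.com/faq']
```

Under this model the bloated guide loses even though its content is just as good. Cutting its weight, rather than rewriting its text, is what would get it scheduled.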

1. Code-to-Text Ratio

GenAI bots hate “bloated” code.

• The Problem: If your blog post has 1,000 words of content wrapped in 10,000 lines of messy JavaScript, tracking codes, and inline CSS, the bot has to burn energy digging through the code to find the text.

• The Strategy: Technical Minimalism. Move CSS and JS to external files. Keep the HTML body clean. Use semantic HTML (<article>, <h1>, <p>) so the bot can instantly identify the payload.
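Code-to-text ratio is usually measured the other way around, as the share of a page's characters that are visible text. The sketch below computes that share with Python's built-in `html.parser`, ignoring the contents of `<script>`, `<style>`, and `<noscript>`. There is no official threshold, so treat the number as a way to compare pages rather than a pass/fail score. The function name is hypothetical.

```python
from html.parser import HTMLParser


class VisibleTextCounter(HTMLParser):
    """Accumulate visible text, ignoring <script>, <style>, and <noscript>."""

    SKIP = {"script", "style", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self.depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0:
            self.chunks.append(data)


def text_to_html_ratio(html: str) -> float:
    """Share of the document's characters that are visible text (0.0 to 1.0)."""
    if not html:
        return 0.0
    counter = VisibleTextCounter()
    counter.feed(html)
    counter.close()
    text = " ".join(" ".join(counter.chunks).split())  # collapse whitespace
    return len(text) / len(html)


# Same 200-word article, once clean and once buried under an inline script.
lean = "<article><h1>Title</h1><p>" + "word " * 200 + "</p></article>"
bloated = "<div><script>" + "var x = 1;" * 2000 + "</script>" + lean + "</div>"
print(f"lean: {text_to_html_ratio(lean):.2f}, bloated: {text_to_html_ratio(bloated):.2f}")
```

The words are identical in both versions. Only the surrounding code changes, and the ratio drops accordingly.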

2. The JavaScript Trap

Many modern sites rely on Client-Side Rendering (the browser builds the page).

• The Risk: Many AI crawlers are not full browsers. They often fetch only the raw HTML and execute little or no JavaScript, unlike Googlebot, which renders pages with an up-to-date version of Chromium. If your content requires 5 seconds of script execution to load, the AI bot might just see a blank page and move on.

• The Strategy: Server-Side Rendering (SSR). Serve the finished HTML directly to the bot. Don’t ask the AI to build the webpage; just hand it the document.
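You can approximate what a non-rendering crawler sees by fetching the raw HTML without running any JavaScript. Then check whether a key sentence appears in the visible text.

This is a sketch, assuming the crawler in question does not render scripts. Googlebot does render them, so this approximates other bots only. The function names and the `User-Agent` string are hypothetical. `fetch_raw_html` uses only the standard `urllib.request` API.

```python
import urllib.request
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Gather text a non-rendering crawler could read: no <script>/<style> bodies."""

    def __init__(self) -> None:
        super().__init__()
        self.skip = 0
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self.skip:
            self.skip -= 1

    def handle_data(self, data):
        if not self.skip:
            self.parts.append(data)


def visible_in_raw_html(raw_html: str, phrase: str) -> bool:
    """True if `phrase` appears in the text of the unrendered HTML."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split()).lower()
    return " ".join(phrase.split()).lower() in text


def fetch_raw_html(url: str) -> str:
    """Download HTML without executing JavaScript (what a non-rendering bot gets)."""
    req = urllib.request.Request(url, headers={"User-Agent": "raw-html-check/0.1"})
    with urllib.request.urlopen(req, timeout=10) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


# Client-side rendered shell: the sentence only exists inside a script.
csr = '<div id="root"></div><script>render("Our pricing starts at $9")</script>'
# Server-side rendered page: the same sentence is already in the HTML.
ssr = "<main><p>Our pricing starts at $9</p></main>"
print(visible_in_raw_html(csr, "pricing starts at $9"))  # False
print(visible_in_raw_html(ssr, "pricing starts at $9"))  # True
```

To check a live page, pass `fetch_raw_html("https://your-site.example/page")` as the first argument. A `False` on a page that looks fine in Chrome is the JavaScript Trap in action.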

3. “Scannability” Signals

How does a bot decide whether a page is worth ingesting? Most likely by looking for structure and density signals right at the top of the page.

• The Strategy: Use a Table of Contents (ToC) at the top.

• Why: A ToC is essentially a “Summary Map” of the content. It tells the bot, “Here is exactly what this page covers.” It lowers the compute required to understand the document structure.
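A ToC can be generated from the page's section headings, so it never drifts out of sync with the content. The sketch below builds a `<nav>` list of anchor links plus matching `<h2 id="…">` tags. It assumes your CMS can hand you the heading texts in order. The function names are hypothetical, and the headings are taken from this episode.

```python
import html
import re


def slugify(text: str, seen: set[str]) -> str:
    """Turn a heading into a URL-safe id, appending -2, -3, ... on duplicates."""
    base = re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-") or "section"
    slug, n = base, 2
    while slug in seen:
        slug, n = f"{base}-{n}", n + 1
    seen.add(slug)
    return slug


def build_toc(headings: list[str]) -> tuple[str, list[str]]:
    """Return a <nav> table of contents plus <h2> tags whose ids the ToC links to."""
    seen: set[str] = set()
    items, h2s = [], []
    for text in headings:
        slug = slugify(text, seen)
        label = html.escape(text)
        items.append(f'  <li><a href="#{slug}">{label}</a></li>')
        h2s.append(f'<h2 id="{slug}">{label}</h2>')
    nav = '<nav aria-label="Table of contents">\n<ol>\n' + "\n".join(items) + "\n</ol>\n</nav>"
    return nav, h2s


toc, h2_tags = build_toc(["Code-to-Text Ratio", "The JavaScript Trap", "Scannability Signals"])
print(toc)
print(h2_tags[1])  # <h2 id="the-javascript-trap">The JavaScript Trap</h2>
```

Place the `<nav>` near the top of the `<article>` and use the `<h2>` tags as the section headings further down.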

ContentXIR Intelligence

The “Ingestion Gap”

We frequently see clients who rank #1 in traditional search (Blue Links) but appear nowhere in AI Overviews.

• This is usually an Ingestion Gap. The page is authoritative enough to rank, but too technically heavy or unstructured to be easily vectorized.

• The Fix: We strip the page down. We remove heavy pop-ups, simplify the DOM structure, and add clear Schema Markup. Suddenly, the AI “sees” it.
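"Clear Schema Markup" usually means a schema.org JSON-LD block in the page's HTML. The sketch below builds a minimal `Article` object using standard schema.org properties (`headline`, `description`, `author`, `datePublished`, `mainEntityOfPage`). It is not ContentXIR's process. The function name and every example value (title, publisher, date, URL) are placeholders.

```python
import json


def article_jsonld(headline: str, description: str, author: str,
                   published: str, url: str) -> str:
    """Build a schema.org Article object wrapped in a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "description": description,
        "author": {"@type": "Organization", "name": author},
        "datePublished": published,  # ISO 8601 date
        "mainEntityOfPage": url,
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # "<\/" is a valid JSON escape; it stops a value containing "</script>"
    # from closing the tag early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration only.
print(article_jsonld(
    headline="The New Crawl Budget",
    description="Why being indexed is not the same as being ingested.",
    author="Example Publisher",
    published="2024-01-15",
    url="https://example.com/the-new-crawl-budget",
))
```

Paste the output into the page's `<head>` or `<body>`, then validate it with a structured-data testing tool before shipping.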

Action Item for S02E01:

The “Text-Only” Audit.

1. Go to a page on your site.

2. In Google Search Console, open URL Inspection for that page and choose View Crawled Page to see the HTML Googlebot actually retrieved. For a quick “Text Only” view, a browser extension that disables CSS and JavaScript also works.

3. The Test: Do you see your content clearly? Or is it cluttered with menu items, “related posts,” and code snippets?

4. If the text isn’t clean, assume the AI isn’t reading it.
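The "cluttered" question in step 3 can be turned into a number. The sketch below counts how many visible words sit inside `<nav>`, `<aside>`, and `<footer>` compared with the rest of the page. You can run it on HTML copied from View Crawled Page.

The "clutter share" metric and the function names are hypothetical, not a Google measure. Menus that are not wrapped in those tags will not be detected.

```python
from html.parser import HTMLParser

CHROME = {"nav", "aside", "footer"}  # page furniture rather than content (assumption)
SKIP = {"script", "style", "noscript", "template"}


class ClutterAudit(HTMLParser):
    """Split visible words into content words and chrome words."""

    def __init__(self) -> None:
        super().__init__()
        self.chrome_depth = 0
        self.skip_depth = 0
        self.content_words = 0
        self.chrome_words = 0

    def handle_starttag(self, tag, attrs):
        if tag in CHROME:
            self.chrome_depth += 1
        elif tag in SKIP:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in CHROME and self.chrome_depth:
            self.chrome_depth -= 1
        elif tag in SKIP and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if self.skip_depth:
            return
        words = len(data.split())
        if self.chrome_depth:
            self.chrome_words += words
        else:
            self.content_words += words


def clutter_share(html: str) -> float:
    """Fraction of visible words in nav/aside/footer (0.0 is cleanest)."""
    audit = ClutterAudit()
    audit.feed(html)
    audit.close()
    total = audit.content_words + audit.chrome_words
    return audit.chrome_words / total if total else 0.0


page = """<header><nav>Home Blog Pricing Contact</nav></header>
<article><h1>Crawl Budget</h1><p>Ingestion is the expensive step.</p></article>
<aside>Related posts: Ten SEO myths</aside><footer>Copyright Example Inc</footer>"""
print(f"{clutter_share(page):.0%} of visible words are page chrome")  # 63% ...
```

In this tiny example, most of the words a bot reads are menus and footers rather than the article itself. That is the pattern step 3 asks you to look for.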

Next Up on S02E02:

• Title: The Knowledge Graph

• Topic: Google’s secret weapon. Before LLMs, there was the Knowledge Graph. We explain how connecting your brand to “Entities” (People, Places, Things) is the fastest way to trigger an AI Overview.
